In online communities, members commonly want to create Internet personas that let them express themselves and establish an online social identity. By allowing users to choose unique public display names to represent those personas, you encourage repeat interaction and engagement with the community. While it is vital to encourage these things, it is also important to ensure public usernames remain appropriate for your environment. The first step in doing so is to identify your target audience. You will most likely want to block profanities in usernames, and you may also need to block personally identifiable information (PII) to comply with COPPA in under-13 communities. To do so, you can implement an automated profanity filter, employ human moderation, or use both. This post will cover the limitations, overhead, and assessment of risk for each approach.

Profanity Filter Challenge

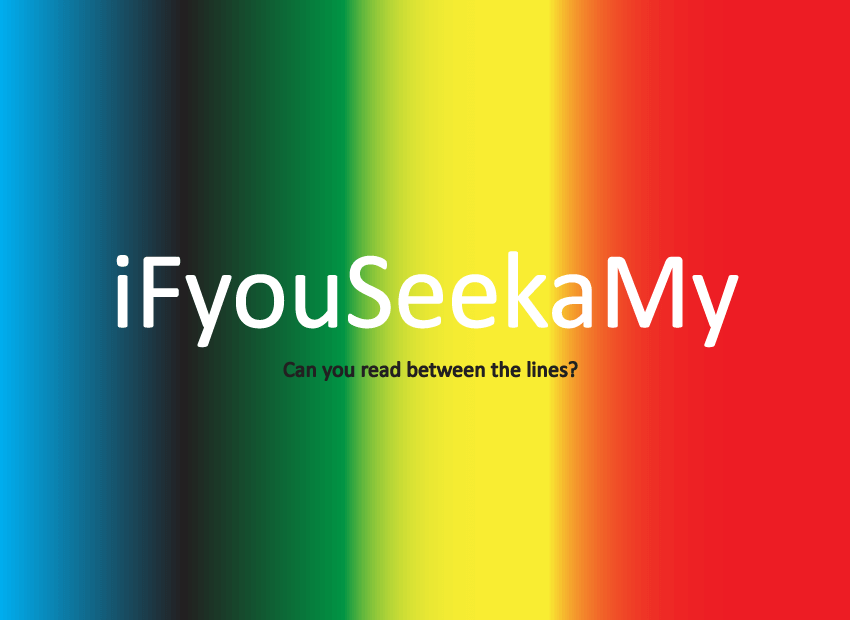

Implementing an automated profanity filter to monitor username creation has several benefits. A filter can block obvious profanities and prevent members from using their real names. (Refer to the following link for more on blocking PII.) However, usernames are analogous to personalized license plates. Sometimes the meaning of the letter/number combination jumps out at you immediately, and other times it’s not so obvious. Consider the following examples (say the words out loud if the meanings are not obvious):
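To make the license plate problem concrete in code, here is a minimal sketch of a username check. It is not how CleanSpeak or any particular filter works: the blocked-word list, the substitution table, and the function names `normalizeUsername` and `findBlockedWord` are all assumptions made for illustration, and mild placeholder words stand in for real profanity.

```typescript
// Hypothetical blocked-word list, using mild placeholder words for illustration.
const BLOCKED_WORDS: readonly string[] = ["darn", "heck"];

// Assumed look-alike substitutions; a real filter would need a far larger table.
const SUBSTITUTIONS: Record<string, string> = {
  "0": "o",
  "1": "i",
  "3": "e",
  "4": "a",
  "5": "s",
  "@": "a",
  "$": "s",
};

// Lowercase the name, map look-alike characters to letters, and drop everything else.
function normalizeUsername(username: string): string {
  let result = "";
  for (const ch of username.toLowerCase()) {
    const mapped = SUBSTITUTIONS[ch] || ch;
    if (mapped >= "a" && mapped <= "z") {
      result += mapped;
    }
  }
  return result;
}

// Return the first blocked word found in the normalized name, or null if none is found.
function findBlockedWord(username: string): string | null {
  const normalized = normalizeUsername(username);
  for (const word of BLOCKED_WORDS) {
    if (normalized.indexOf(word) !== -1) {
      return word;
    }
  }
  return null;
}

console.log(findBlockedWord("D4rn_Guy")); // "darn": the digit is mapped to a letter
console.log(findBlockedWord("h.e.c.k"));  // "heck": the separators are dropped
console.log(findBlockedWord("hek_yeah")); // null: a sound-alike spelling slips through
```

The last call shows the limitation: a check like this only catches spellings it can map back to a listed word, so names whose meaning appears only when read aloud still get through. That gap is one reason to consider pairing a filter with human moderation.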

Continue reading →

Inversoft is excited to announce the launch of our newest open-source library, Prime.js. Prime.js is the JavaScript library that powers CleanSpeak. Prime.js is hosted on GitHub, and you can visit the project website here: http://inversoft.github.io/prime.js/

Prime.js is different from other JavaScript libraries because it was built for object-oriented JavaScript. Everything in Prime.js is namespaced and built from explicit JavaScript objects. We also embraced the `new` operator and the `this` variable, making them first-class citizens again.
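As a rough illustration of that style, the sketch below shows a namespaced object that is created with `new` and keeps its state on `this`. It is written in TypeScript and does not use the Prime.js API; the names `Example`, `Widgets`, and `Counter` are hypothetical and chosen only for this example.

```typescript
// Hypothetical class: state lives on `this`, and instances are created with `new`.
class Counter {
  private count: number;

  constructor(start: number) {
    this.count = start;
  }

  // Returning `this` allows calls to be chained on the same object.
  increment(): this {
    this.count += 1;
    return this;
  }

  value(): number {
    return this.count;
  }
}

// Hypothetical namespace: a plain object that groups related classes under one name.
const Example = {
  Widgets: {
    Counter,
  },
};

// Callers reach the class through the namespace and construct it explicitly.
const counter = new Example.Widgets.Counter(2);
console.log(counter.increment().increment().value()); // 4
```

Grouping classes under a single top-level name keeps them out of the global scope, and explicit construction makes it clear which object each method call operates on.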

Continue reading →

Last week was an adventure for all who attended the Online Community Unconference in Mountain View, California. The Unconference is an event (not a conference) where participants take the reins: a place for new and seasoned community managers to engage with like minds and share their experience and insights.

Professionals from across the industry (managers, producers, executives, technology providers and investors) discussed the solutions and strategies they have used to nurture and develop their online communities. There is no one better to offer advice than those on the front lines, continuously tending to their own online communities.

Participants got all they could handle from the well-organized Unconference, which included over 60 sessions in 7 hours. With over 150 attendees, it seemed everyone had something to give and gain from this enlightening event.

No other event allows the community manager a chance to create, guide and share face to face with other industry professionals on current and emerging trends.

Inversoft would like to thank organizers Bill Johnston, Randy Farmer, Gail Williams, Kaliya Hamlin, Scott Moore, Susan Tenby, Maria Ogneva and Mark Smith for all their hard work. Congratulations on pulling off a great event!

For your viewing pleasure, we have provided an Online Community Unconference video teaser for everyone, whether you attended this fantastic event or missed it.

To learn more about Inversoft and online safety, follow us at @inversoft.

Further Reading:

3 Ways Small Companies Rock

Online Community Unconference 2013

Online Community: 3 Types of User Communication

Continue reading →

Working with a smaller software company can be a big advantage to customers that require a mix of nimbleness, quality and responsiveness. This combination can overcome resource limitations to provide high value products and services.

Small companies are typically made up of company founders and early employees who thoroughly understand the company’s mission, vision and product set. This motivated group is quick to comprehend customer needs and is empowered to move fast to deliver solutions. While large companies often have more resources to draw on, they are typically fraught with red tape and politics that bog down product development.

Continue reading →

One of the fascinating impacts of our now very social media environment is technology companies having to learn a whole lot about the best and worst of humanity – and, for their own and their users’ sake, about how to foster the best of it. Facebook, for example, has an engineering team working with empathy researchers, and this has direct impact on young users’ social experiences, wherever FB’s embedded in them.

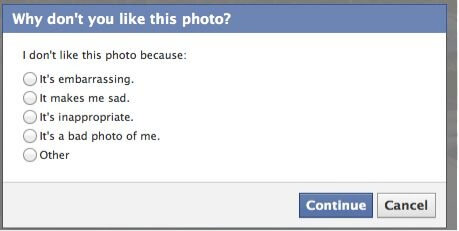

Psychologist Marc Brackett at Yale University, who developed a social-emotional learning (SEL) program for schools, has been working with Facebook on its social-reporting tools for 13- and 14-year-olds. [Social reporting is basically abuse reporting with additional options to "report" offending content or behavior to people who can help with the problem in "real life," which is typically the context of whatever goes on in Facebook.] What Facebook has set up for young teens, with the help of Marc and other researchers, is not only a social reporting process (which it calls a “flow”), but one that actually teaches them social literacy as they go through it.

Here’s part of Facebook’s reporting flow that, in a simple way, gets users to think about and accurately express what bothers them about a photo:

Continue reading →