When you are building a game of any sort, there is bound to be content generated by users. It can arise from their activity or progress, or it can play a bigger role as playable content such as maps and items. In any case, you need to moderate the content that users generate and put into your games. In this guide, we will share some best practices for moderating and managing user-generated content (UGC).

Continue reading →

The following is an excerpt from CleanSpeak’s new whitepaper: Combatting Hate & Extremism on Your Gaming Platform. The link to the full whitepaper can be found at the bottom of the article.

Continue reading →

Community guidelines are the rules of the road for how members should behave. They help mold your community to your vision and, in turn, promote healthy discourse as well as user retention and growth.

Continue reading →

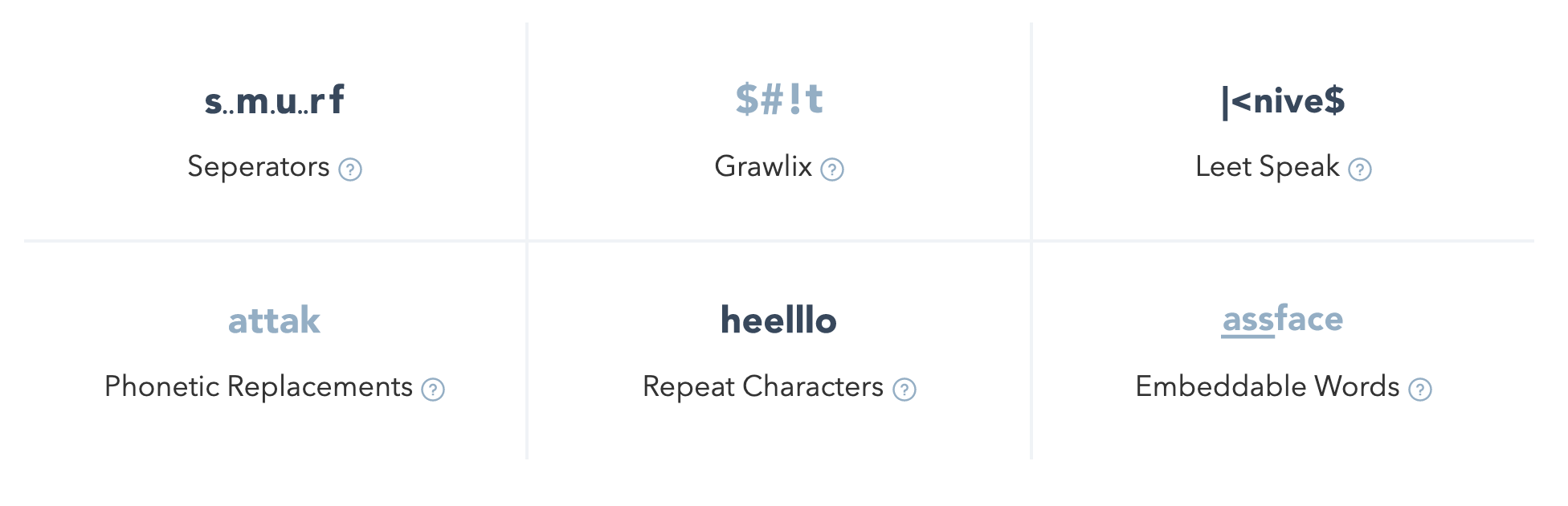

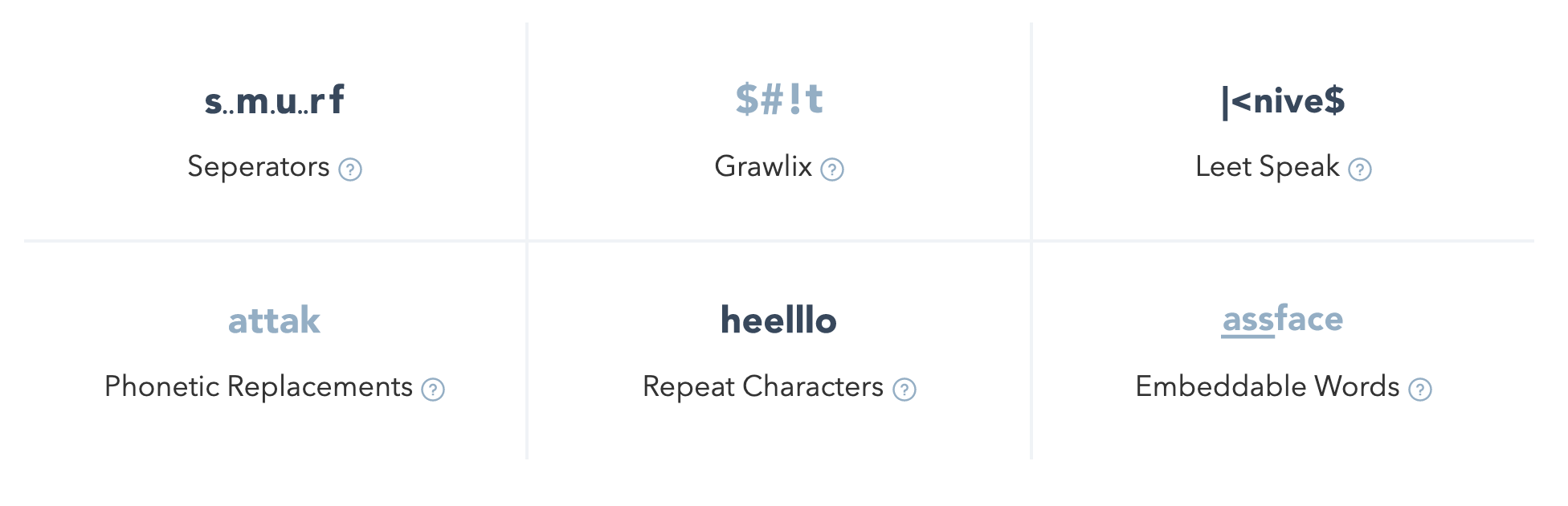

These profanity filtering and content moderation best practices cover key platform requirements and essential tools for advanced, enterprise-scale filtering systems.

Continue reading →

You may have heard of user-generated content (UGC) before, but what does it actually mean? UGC refers to any type of media produced by users rather than by the company that owns or operates the platform where the media is shared. Platforms built around UGC include YouTube and Facebook. Photos, videos, comments, and product reviews are all examples of UGC.

Continue reading →