The short answer: No

The long answer: All online communities, including those aimed at adults, can benefit from an advanced profanity filter. By looking at the key objectives shared by online communities and how filtering profanity supports each one, we can show clearly why profanity filters aren’t just for kids.

Continue reading →

In online communities, members commonly want to create Internet personas that let them express themselves and establish an online social identity. Letting users choose unique public display names for the personas they want to create encourages repeat interaction and engagement with the community. While it is vital to encourage this, it is also important to make sure public usernames remain appropriate for your environment. The first step is to identify your target audience. You will most likely want to block profanity in usernames, and if your community serves children under 13, you may also need to block personally identifiable information (PII) to comply with COPPA. To do so, you can implement an automated profanity filter, rely on human moderation, or combine the two. This post covers the limitations, overhead, and risks of each approach.
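One way to picture the combined approach is a three-way decision: the automated filter rejects clear violations, approves clearly clean names, and sends borderline names to a human moderator. The sketch below assumes hypothetical term lists (`BLOCKED_TERMS`, `WATCH_TERMS`) and two rough PII patterns; real lists would be curated, and these regexes alone are nowhere near enough for COPPA compliance.

```python
import re
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    REVIEW = "review"  # queue for a human moderator


# Hypothetical placeholder lists; a real deployment would load curated lists.
BLOCKED_TERMS = {"badword"}   # always rejected
WATCH_TERMS = {"kill"}        # ambiguous, so a human decides

# Rough, illustrative PII patterns: an email address or a long run of digits
# (e.g. a phone number). These are assumptions, not a complete PII detector.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
PHONE_RE = re.compile(r"\d{7,}")


def screen_username(name: str) -> Decision:
    lowered = name.lower()
    # Clear violations are rejected automatically.
    if any(term in lowered for term in BLOCKED_TERMS):
        return Decision.REJECT
    if EMAIL_RE.search(name) or PHONE_RE.search(name):
        return Decision.REJECT
    # Substring matches on watch terms are often innocent ("skillful"),
    # so they go to a person instead of being blocked outright.
    if any(term in lowered for term in WATCH_TERMS):
        return Decision.REVIEW
    return Decision.APPROVE
```

For example, `screen_username("killerwhale")` returns `Decision.REVIEW`: the filter alone cannot tell whether the name is harmless, which is exactly the case where human moderation earns its overhead.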

Profanity Filter Challenge

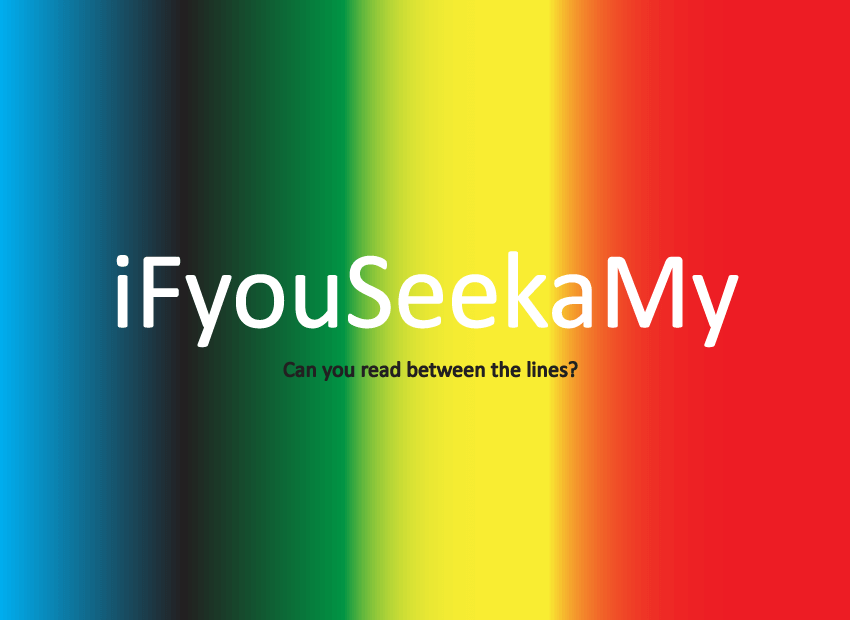

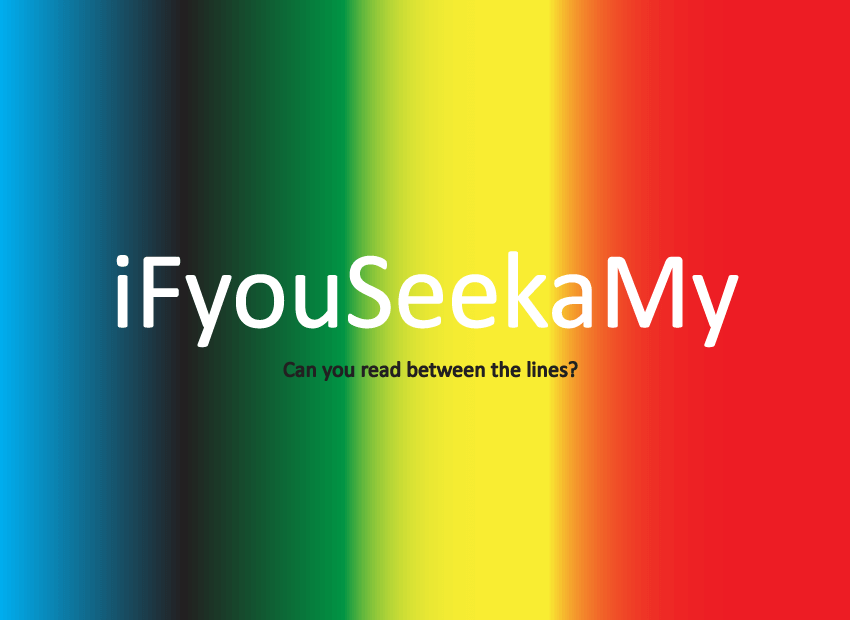

Implementing an automated profanity filter to monitor username creation has several benefits. A filter can block obvious profanity and prevent members from using their real names. (See our related post on blocking PII for more.) However, usernames are like personalized license plates. Sometimes the meaning of a letter/number combination jumps out at you immediately, and other times it’s not so obvious. Consider the following examples (say the words out loud if the meanings are not obvious):

Continue reading →
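License-plate-style usernames often hide a word behind digit substitutions, separators, or repeated letters. A common countermeasure is to normalize a name before matching it against a blocklist. The sketch below assumes a hypothetical substitution table (`LEET_MAP`) and placeholder blocklist; it only catches character-level tricks, not names that work phonetically when said out loud, which is one reason filters still need human backup.

```python
# Hypothetical table mapping common look-alike characters to letters.
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})

BLOCKLIST = {"badword"}  # placeholder entry


def normalize(name: str) -> str:
    # Lowercase, map look-alikes to letters, then drop every non-letter
    # (separators such as "_", ".", "-" and any unmapped digits).
    mapped = name.lower().translate(LEET_MAP)
    return "".join(ch for ch in mapped if ch.isalpha())


def collapse_repeats(s: str) -> str:
    # Squash runs of the same letter: "baaadword" -> "badword".
    out = []
    for ch in s:
        if not out or out[-1] != ch:
            out.append(ch)
    return "".join(out)


def is_blocked(name: str) -> bool:
    n = normalize(name)
    squashed = collapse_repeats(n)
    # Check both forms so blocklist terms that contain double letters still match.
    return any(term in n or term in squashed for term in BLOCKLIST)
```

With this sketch, `B4dW0rd`, `b_a_d_w_o_r_d`, and `baaadword99` all normalize to a blocked form, while `GoodPanda` passes.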

You recognize the potential value of a profanity filter for keeping your community clean and productive, but you wonder: can it be trusted? Will the filter offer the efficiency you want while staying flexible enough for the ever-changing online environment? After all, even the most advanced language filtering techniques will produce false positives or let creative slang slip through the cracks. The good news is that there is a way to make profanity filtering more effective: updating filter lists in real time. The ability to customize your profanity filter in real time is key to gaining the security you need, optimizing the user experience, and building a successful community.

Protect Your Community Culture

Online communities commonly develop their own sub-culture, enriched by new and creative vocabulary specific to that culture. Had you heard of the terms uber, leet, or ftw before the rise of virtual worlds? Site owners should embrace and support this phenomenon, because it strengthens the community by enhancing the online experience and increasing user engagement. However, new and fun ways to communicate within a community also open the door to new methods of harassment and bullying. So while it is important to allow the community to evolve and be creative, you must also protect your members. A powerful tool for doing so is the ability to update your profanity filter in real time, which provides the following benefits:

Continue reading →
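Updating a filter list in real time means changing the list while the filter keeps serving traffic, with no restart or redeploy. A minimal sketch of one way to do that in-process, assuming a hypothetical `LiveFilter` class: writers build a new immutable set and swap it in under a lock, and readers simply use whichever set is current when they start checking a message.

```python
import re
import threading

TOKEN_RE = re.compile(r"[a-z0-9']+")


class LiveFilter:
    """Word filter whose blocklist can change while it serves traffic (illustrative)."""

    def __init__(self, words=()):
        self._lock = threading.Lock()
        self._words = frozenset(w.lower() for w in words)

    def add(self, *words):
        # Build a new frozenset and swap the reference; the lock keeps two
        # concurrent updates from overwriting each other.
        with self._lock:
            self._words = self._words | {w.lower() for w in words}

    def remove(self, *words):
        with self._lock:
            self._words = self._words - {w.lower() for w in words}

    def check(self, message: str) -> list:
        words = self._words  # snapshot of the current list; no lock needed to read
        return [tok for tok in TOKEN_RE.findall(message.lower()) if tok in words]
```

When moderators spot a new harassment term, they call `add("newinsult")` (a placeholder term) and the very next message is checked against the updated list; `remove` undoes an entry that turns out to cause false positives.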

Communities are no longer restricted by walls or boundaries. People from all over the world can congregate and share their thoughts and opinions with the click of a button. A site owner has an inherent responsibility to protect users and prevent unwanted content. The chat filter is your first line of defense, but when multiple languages find their way into the community, it can misfire and produce false positives. Filtering multiple languages at the same time can quickly turn your leading advocates into antagonists.

1. Word Collision

Word collisions occur when multiple languages are filtered from a single central blacklist. A word in English does not necessarily mean the same thing in German or Spanish, so filtering words and phrases in multiple languages within one community can create false positives. For example, the word pupil (the center of the eye) is harmless, but when an “a” is added at the end, “pupila,” it becomes derogatory. The same sequence of letters can be harmless in one language and profane in another. Know which languages your members use, and refine your filter for the ones you see most often.
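A collision can be avoided by keeping a separate blocklist per language and checking each message only against the list for its language. The sketch below uses placeholder entries: pretend that `sample` is an ordinary English word that happens to be profane in German. It assumes the message language is already known (for example from the user's locale setting); how you detect it is outside this sketch.

```python
import re

# Hypothetical per-language blocklists keyed by ISO 639-1 code.
# "sample" is a placeholder for a word that is harmless in English
# but profane in German, i.e. a word collision.
BLOCKLISTS = {
    "en": {"badword"},
    "de": {"sample"},
}

# A single central blacklist is the union of every language's entries.
CENTRAL_LIST = set().union(*BLOCKLISTS.values())


def tokens(text):
    return re.findall(r"\w+", text.lower())


def central_check(text):
    """Matches against every language at once, so collisions cause false positives."""
    return [t for t in tokens(text) if t in CENTRAL_LIST]


def per_language_check(text, lang, default="en"):
    """Matches only against the list for the message's language."""
    blocklist = BLOCKLISTS.get(lang, BLOCKLISTS[default])
    return [t for t in tokens(text) if t in blocklist]
```

An English message such as "Here is a sample" is flagged by `central_check` but passes `per_language_check(..., "en")`, while the same text tagged `"de"` is still caught. Unknown languages fall back to the default list, which is a design choice you may want to change.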

Continue reading →

We have been hearing from prospective customers that their profanity filters have been crashing their servers. Sure, there are some good jokes about what people do when servers go down, but in reality, when servers crash, users get angry, and angry users can have a big impact on your business. We have compiled two lists of suggestions to help you prevent issues with your filter.

Picking a Good Filter

Picking a good profanity filter is important. Not only should you select a filter that is accurate and customizable, but you should also pick one that can scale. Here are five things to look for to ensure your profanity filter won’t crash your servers.

1. On-premise

The best way to reduce your risk of crashes is to use a filter that can scale. Because an on-premise filter runs inside your own infrastructure, it avoids a network round trip to an outside service for every message, which generally means lower latency and higher throughput. That makes it far better positioned to keep up with massive spikes in traffic.

Continue reading →